It’s been more than four months since Donald Trump was inaugurated as the 45th president of the United States, and librarians and data scientists are hard at work to preserve government research they fear could be lost or removed by his administration.

The effort began at the University of Toronto and at Van Pelt Library at the University of Pennsylvania prior to Trump’s inauguration and has since spread to as many as two dozen universities and libraries across the US and Canada.

The fear that government research and information, particularly that produced by the Environmental Protection Agency, NASA, and the National Oceanic and Atmospheric Administration, could be lost is not unfounded. It happened under the administration of former Canadian Prime Minister Stephen Harper, who according to news reports allowed fishery and oceanographic data to be destroyed after taking office in 2006.

It can happen here, too, says Dawn Walker, a PhD student at the Faculty of Information at the University of Toronto, who has helped organize data recovery events at various universities.

Data scientists are using two methods to gather the publicly available data from government websites. Web crawlers scan websites and collect information and vital data sets for storage. But the more complex data sets require computer-savvy individuals—some of whom call themselves “baggers”—to write custom computer code to collect the information. Many of those scripts can be used on different pages, according to Walker. She notes that the Environmental Data and Governance Initiative—a network of academics and nonprofits working to preserve government data—has created an extension that can be added to the Google Chrome web browser that allows users to nominate data sets for archiving.
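To give a sense of what a small custom collection script might look like, here is a minimal sketch in Python. It is an illustration only, not code from any of the groups described here: the function name `find_data_links`, the class `DataLinkParser`, and the list of file extensions are all assumptions. It scans a page's HTML and lists the links that appear to point at downloadable data files.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

# Assumption: file extensions that this sketch treats as "data sets".
DATA_EXTENSIONS = (".csv", ".zip", ".json", ".xls", ".xlsx")


class DataLinkParser(HTMLParser):
    """Collects links on a page that look like downloadable data files."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            # Compare case-insensitively so "X.ZIP" is matched as well.
            if name == "href" and value and value.lower().endswith(DATA_EXTENSIONS):
                # Resolve relative links against the page's own address.
                self.links.append(urljoin(self.base_url, value))


def find_data_links(html, base_url):
    """Return absolute URLs of the data-file links found in the HTML text."""
    parser = DataLinkParser(base_url)
    parser.feed(html)
    return parser.links
```

A script like this only finds files that are linked directly from a page. Data served through search forms or interactive tools would need code written for that particular site, which is presumably where the custom work comes in.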

The data undergoes a thorough review process to ensure the integrity of the information collected, she says. The data sets are then downloaded to a repository at datarefuge.org, where the information is publicly available.
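One common way to check that archived files have not changed after collection is to record a checksum for each file and compare it later. The Python sketch below shows that general technique; it is an assumption about what such a check could look like, not a description of the actual review process, and the names `sha256_of`, `build_manifest`, and `changed_files` are hypothetical.

```python
import hashlib
from pathlib import Path


def sha256_of(path):
    """Return the SHA-256 digest of a file, read in chunks to limit memory use."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(folder):
    """Map each file's path (relative to folder) to its SHA-256 digest."""
    folder = Path(folder)
    return {
        p.relative_to(folder).as_posix(): sha256_of(p)
        for p in sorted(folder.rglob("*"))
        if p.is_file()
    }


def changed_files(folder, manifest):
    """List files that were added, removed, or altered since the manifest was built."""
    current = build_manifest(folder)
    names = manifest.keys() | current.keys()
    return sorted(n for n in names if manifest.get(n) != current.get(n))
```

In use, the manifest would be built once when a data set is collected and stored alongside it. Running `changed_files` against that stored manifest later returns an empty list if every file still matches.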

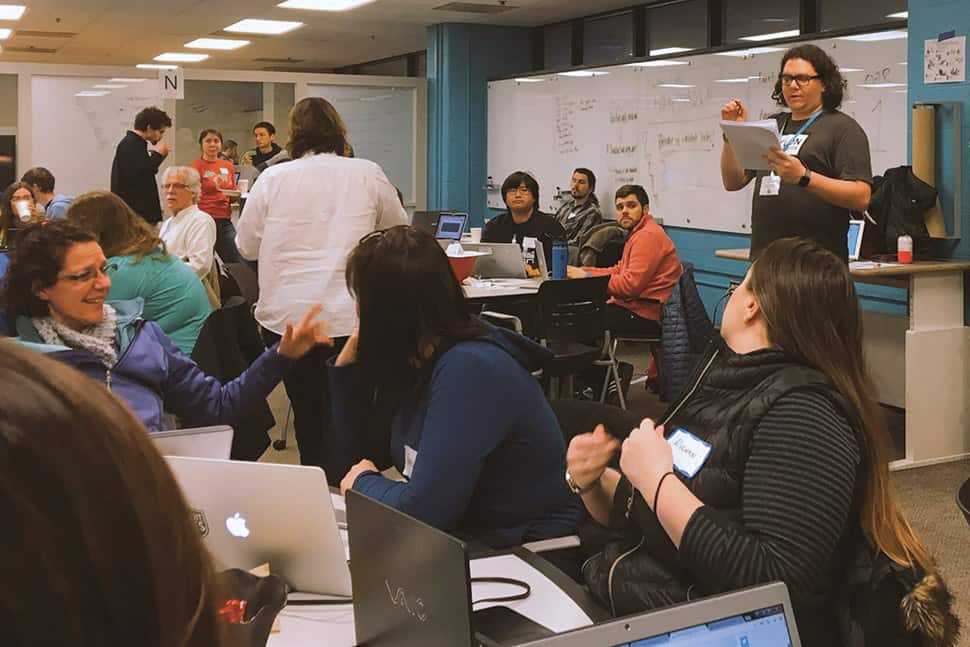

Justin Schell, director of the Shapiro Design Lab at the University of Michigan’s Shapiro Undergraduate Library and organizer of a data rescue event, says he first learned of the guerrilla archiving events after reading about the University of Toronto’s hackathon in December. He says he’s been working on the project every day since.

Schell says collecting the data is only part of the challenge in preserving it. Verifying the data’s validity, organizing and describing it, and ensuring that it is in an accessible format are just as vital, he says.

Those tasks entail, in many cases, contacting government officials, scientists, academics, and others who are knowledgeable about climate data. That includes cross-referencing data with studies available at data repositories like the Inter-university Consortium for Political and Social Research, one of the largest in the world, Schell says.

It’s not uncommon for those collecting research and scientific data to end up with multiple and differing copies of the same information, Schell says. He says that leaves archivists and librarians to answer the question: “Which one is verifiable?”

“We’re trying to better understand what’s in these data sets to make sure we’re not just getting part of the picture,” he says, adding that the effort takes “a lot of networking.”

Walker says librarians also have been instrumental in the “bagging” process, leading the conversation about best practices in archiving and preserving the material.

“It’s really exciting to see how this has been a way for people with expertise in a variety of areas to come together [on the issue],” she says. “Now there’s a public interest in [archiving the data], and a lot of people are looking for ways to continue that momentum.”